FuriosaAI on Thursday unveiled its second-generation artificial intelligence chip, Renegade (RNGD). The launch targets the industry's shift from model training to high-volume, cost-sensitive AI inference workloads, where the company is promising efficiency gains for data centers.

The RNGD chip, which entered mass production in January, is designed to process large volumes of real-time AI queries. Its design prioritizes low power consumption and low operating costs for large-scale AI deployments.

Addressing attendees at the Renegade 2026 Summit in Seoul, FuriosaAI CEO Paik June-ho highlighted the critical market trend: “By 2030, we project an addition of approximately 100 gigawatts of AI data center capacity worldwide, with a substantial 70 percent of this capacity dedicated to inference tasks.”

Paik further emphasized, “The fundamental principle of future data center architecture will hinge on how effectively operators can execute repetitive AI inference workloads while achieving the absolute lowest total cost of ownership.”

In benchmarks conducted with international clients, FuriosaAI’s RNGD handled up to 7.4 times more concurrent users than Nvidia’s RTX Pro 6000 at an equivalent power level. With a thermal design power (TDP) of approximately 180 watts, RNGD can cut overall data center total cost of ownership (TCO) by an estimated 40 percent, the company projects.

“The expansion of the AI ecosystem is unsustainable if computing expenses continue to outpace revenue growth,” Paik asserted. “Our core mission at FuriosaAI is to ensure AI computing becomes both sustainable and widely accessible for all enterprises.”

FuriosaAI began silicon development for Renegade in 2022, and initial samples became available two years later. From the outset, the company treated power efficiency as a fundamental design constraint.

“Instead of solely pursuing maximum peak performance, our strategy involved establishing a stringent power envelope – specifically under 200 watts – and meticulously optimizing the chip to efficiently run diverse and rapidly evolving AI models within these predefined limits,” explained Paik.

This year, the company began mass production of approximately 4,000 RNGD units. That makes RNGD one of the few high-performance Neural Processing Units (NPUs) with high-bandwidth memory to reach commercial-scale production.

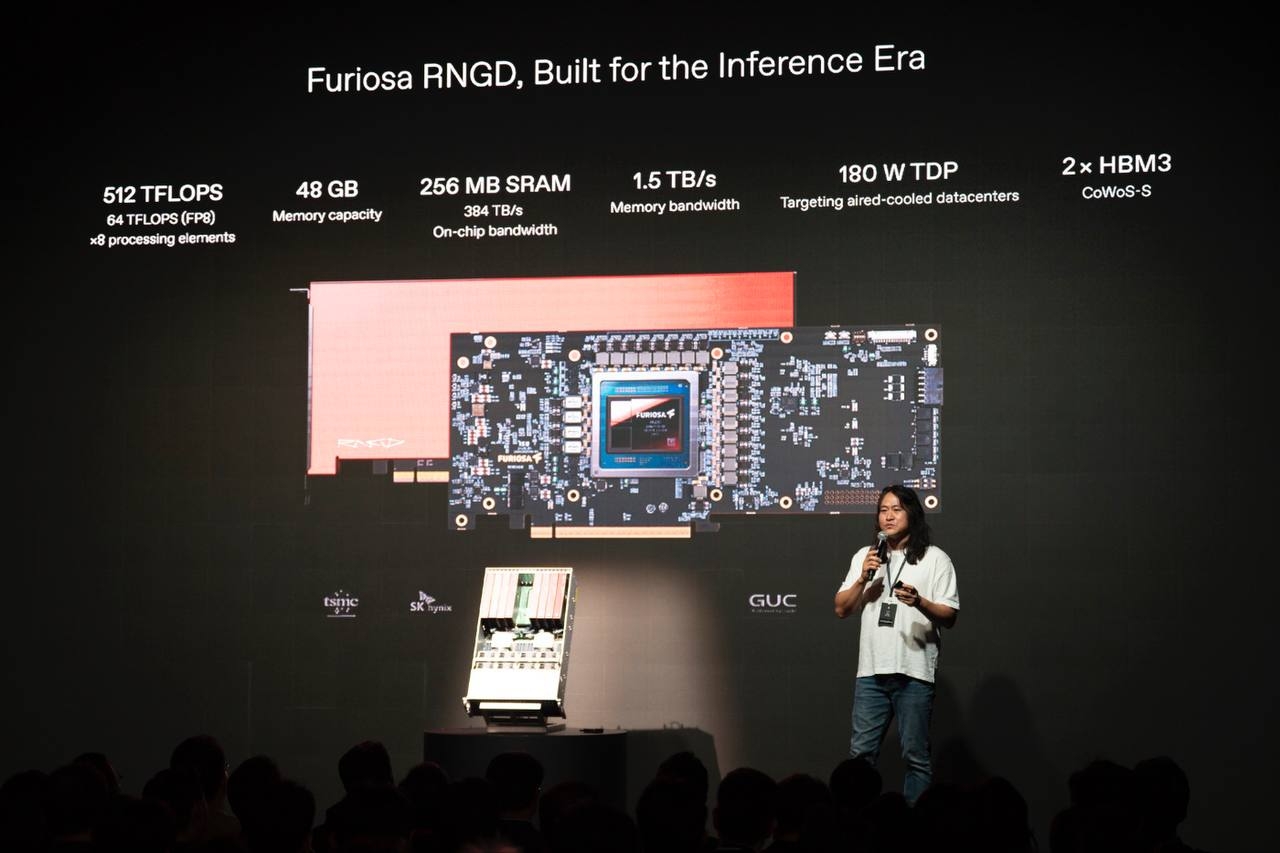

The RNGD chip contains approximately 40 billion transistors and is manufactured by TSMC on a 5-nanometer process. It is paired with fourth-generation high-bandwidth memory (HBM3) supplied by SK hynix.

FuriosaAI confirmed that each RNGD chip delivers up to 512 teraflops of compute performance and roughly 1.5 terabytes per second of memory bandwidth.
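To put the quoted figures in context, the short Python sketch below derives three ratios from them: peak compute per watt, memory bandwidth per watt, and the compute-to-bandwidth ratio (the "ridge point" in a roofline-style view, above which a workload is limited by compute instead of memory). It is back-of-the-envelope arithmetic only, not a FuriosaAI method. It assumes the approximately 180-watt TDP cited in this article and treats the peak numbers as given; the numeric precision behind the 512-teraflops figure is not stated, and the function names are illustrative.

```python
# Approximate figures as quoted in the article (vendor peak numbers).
PEAK_TFLOPS = 512.0   # "up to 512 teraflops"; numeric precision not stated
MEM_BW_TBPS = 1.5     # "roughly 1.5 terabytes per second"
TDP_WATTS = 180.0     # "approximately 180 watts"


def tflops_per_watt(peak_tflops: float, tdp_watts: float) -> float:
    """Peak compute per watt of thermal design power."""
    return peak_tflops / tdp_watts


def gb_per_s_per_watt(bw_tbps: float, tdp_watts: float) -> float:
    """Memory bandwidth per watt, in GB/s (1 TB/s taken as 1000 GB/s)."""
    return bw_tbps * 1000.0 / tdp_watts


def ridge_point_flops_per_byte(peak_tflops: float, bw_tbps: float) -> float:
    """FLOPs per byte of memory traffic needed to reach peak compute."""
    return (peak_tflops * 1e12) / (bw_tbps * 1e12)


if __name__ == "__main__":
    print(f"Compute per watt:   {tflops_per_watt(PEAK_TFLOPS, TDP_WATTS):.2f} TFLOPS/W")
    print(f"Bandwidth per watt: {gb_per_s_per_watt(MEM_BW_TBPS, TDP_WATTS):.1f} GB/s/W")
    print(f"Ridge point:        {ridge_point_flops_per_byte(PEAK_TFLOPS, MEM_BW_TBPS):.0f} FLOPs/byte")
```

With these inputs the ratios come out to roughly 2.84 teraflops per watt, 8.3 gigabytes per second per watt, and about 341 operations per byte. Real inference workloads seldom sustain peak figures, so these are upper bounds, not measured efficiency.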

Beyond hardware, FuriosaAI plans to expand its software stack, which includes compilers and software development kits (SDKs) built for scalable inference architectures. Partners including LG AI Research, LG Uplus, Samsung SDS, and MegazoneCloud showcased deployment use cases.

Samsung SDS announced plans to launch a subscription-based neural processing unit (NPU) service in July using FuriosaAI’s RNGD chips. It would be the first such offering from a domestic cloud provider in South Korea.

The service will let customers access RNGD chips through the Samsung Cloud Platform, with flexible configurations and integration with existing storage, networking, and compute services. The move diversifies Samsung SDS’ current GPU-based cloud offerings and supports South Korea’s national push to build “sovereign AI” infrastructure.

Vice Minister Ryu Je-myung, speaking at the event, praised FuriosaAI’s potential: “FuriosaAI stands as one of the most formidable contenders poised for success in the global AI landscape. The RNGD chip is strategically positioned to secure an irreplaceable role within the global supply chain, particularly as we enter the transformative era of physical AI.”